AI Integration That Works With Your Existing Systems, Not Against Them

Embed AI capabilities into the applications, workflows, and infrastructure you already operate. No rip-and-replace. Practical AI that delivers measurable value within your current technology environment.

Why AI Integration Needs Engineering, Not Just Consulting

Most companies want AI to improve what they already have, not rebuild from scratch. We connect AI to your existing systems through clean APIs and data pipelines - not slide decks.

Traditional AI Consulting

Assessment & Recommendations

Weeks of discovery producing a strategy deck. Use-case prioritization matrices. Roadmaps with no working code attached.

Proof of Concept

Isolated PoC built on clean sample data. Works in a sandbox. Disconnected from your production systems and real data quality.

Custom Build from Scratch

Rip-and-replace approach. Existing systems treated as legacy. Months of development before any integration touches production.

Integration as Afterthought

Connecting the AI model to your systems happens last. Data format mismatches, latency issues, and authentication problems surface late.

Handoff & Documentation

Project delivered. Consultants leave. Your team inherits a system they did not build and cannot easily maintain or optimize.

AI-Native Engineering Approach

API-First Integration Design

Start with your existing systems. Map data flows, identify integration points, and design AI capabilities that connect to those systems rather than replace them.

Data Pipeline Engineering

Build the data pipelines that feed models and return results to production systems. Handle data quality, format, and latency from day one.

AI Layer Into Existing Workflows

AI capabilities deployed as services your existing applications call. Your systems stay intact. AI adds intelligence without disruption.

Cost & Performance Monitoring

API costs tracked, model performance measured, and integration health monitored. We watch our own bills, and we will watch yours.
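As a rough illustration of what tracking API costs can look like, here is a minimal sketch that accumulates estimated spend per model from token counts. The model name, the prices in `PRICE_PER_1K`, and the `UsageTracker` class are all hypothetical placeholders, not real provider pricing or an actual production tool.

```python
from dataclasses import dataclass, field

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider and model.
PRICE_PER_1K = {"example-model": {"input": 0.50, "output": 1.50}}


@dataclass
class UsageTracker:
    """Accumulates estimated spend per model across API calls."""
    totals: dict = field(default_factory=dict)

    def record(self, model: str, input_tokens: int, output_tokens: int) -> float:
        """Estimate the cost of one call and add it to the running total."""
        price = PRICE_PER_1K[model]
        cost = (input_tokens / 1000) * price["input"] \
            + (output_tokens / 1000) * price["output"]
        self.totals[model] = self.totals.get(model, 0.0) + cost
        return cost


tracker = UsageTracker()
call_cost = tracker.record("example-model", input_tokens=2000, output_tokens=1000)
print(f"This call: ${call_cost:.2f}, running total: ${tracker.totals['example-model']:.2f}")
```

In practice the token counts would come from the usage data the provider returns with each response, and the totals would feed a dashboard or alert threshold.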

Your Team Owns the System

Built to be maintained by your team, not dependent on us. Clean code, documentation, and knowledge transfer from engineers, not consultants.

What We Build

LLM Integration

Connect OpenAI, Claude, or Gemini models to your application for intelligent search, content generation, summarization, and natural language processing.

Predictive Analytics & ML Models

Custom models for demand forecasting, churn prediction, pricing optimization, anomaly detection. Built on your data, deployed in your environment.

Computer Vision

Image classification, object detection, OCR, visual inspection. Applications in infrastructure planning, document processing, quality control.

Data Pipeline Development

ETL pipelines that prepare your data for AI consumption. Cleansing, transformation, enrichment from multiple sources into AI-ready formats.
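To make the cleansing and transformation step concrete, the sketch below normalizes a few raw records, drops unusable rows, and removes duplicates. The field names (`email`, `signup_date`, `plan`) and the `DD/MM/YYYY` input date format are assumptions chosen for illustration only.

```python
from datetime import datetime
from typing import Optional


def clean_record(raw: dict) -> Optional[dict]:
    """Normalize one raw record; return None if it cannot be used."""
    email = (raw.get("email") or "").strip().lower()
    if "@" not in email:
        return None  # reject rows without a plausible email address
    # Assumed source format is DD/MM/YYYY; output is ISO 8601.
    signup = datetime.strptime(raw["signup_date"].strip(), "%d/%m/%Y").date().isoformat()
    plan = (raw.get("plan") or "unknown").strip().lower()
    return {"email": email, "signup_date": signup, "plan": plan}


def run_pipeline(rows: list) -> list:
    """Clean every row and keep the first record seen for each email."""
    seen = set()
    cleaned = []
    for row in rows:
        record = clean_record(row)
        if record is None or record["email"] in seen:
            continue
        seen.add(record["email"])
        cleaned.append(record)
    return cleaned


raw_rows = [
    {"email": " Ana@Example.com ", "signup_date": "03/02/2024", "plan": "Pro"},
    {"email": "ana@example.com", "signup_date": "04/02/2024", "plan": "pro"},
    {"email": "not-an-email", "signup_date": "01/01/2024"},
]
print(run_pipeline(raw_rows))
```

A production pipeline would add enrichment from other sources and log rejected rows rather than silently dropping them.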

AI-Powered Search & Recommendations

Semantic search using vector embeddings and recommendation engines that understand intent, not just keywords.
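The core idea behind semantic search with vector embeddings can be sketched in a few lines: documents and queries are represented as vectors, and results are ranked by cosine similarity. The three-dimensional vectors and document IDs below are made-up toy values; in a real system the vectors would come from an embedding model and be stored in a vector index.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query_vec, index, top_k=2):
    """Return the top_k (doc_id, score) pairs, most similar first."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy embeddings (hypothetical values standing in for model output).
INDEX = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "careers": [0.0, 0.1, 0.9],
}

query = [0.8, 0.2, 0.0]  # stands in for the embedding of "how do I get my money back"
results = search(query, INDEX)
for doc_id, score in results:
    print(f"{doc_id}: {score:.3f}")
```

The query shares no keywords with "refund-policy", yet that document ranks first because the vectors point in nearly the same direction, which is what matching on intent rather than keywords means here.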

Legacy System AI Augmentation

Add AI to existing applications without rewriting them. API middleware, microservices, progressive enhancement.

Our Work

Integrated three AI features that reduced compliance effort by 97% for 15,000+ learners

97%

Compliance Effort Reduction

25%

Faster English Acquisition

15,000+

Multilingual Support for 15k+ Users

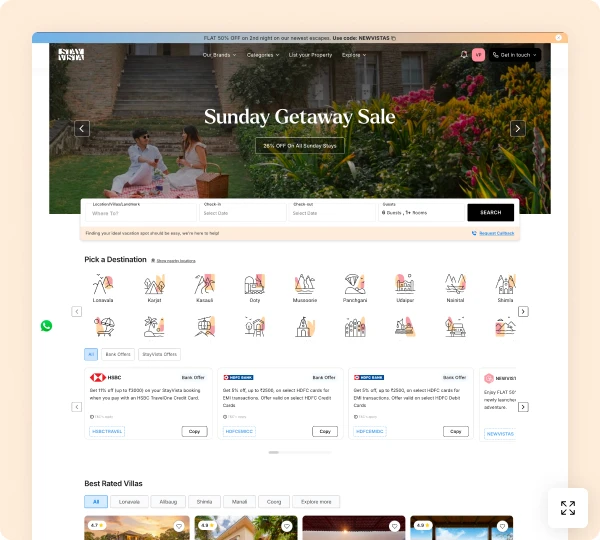

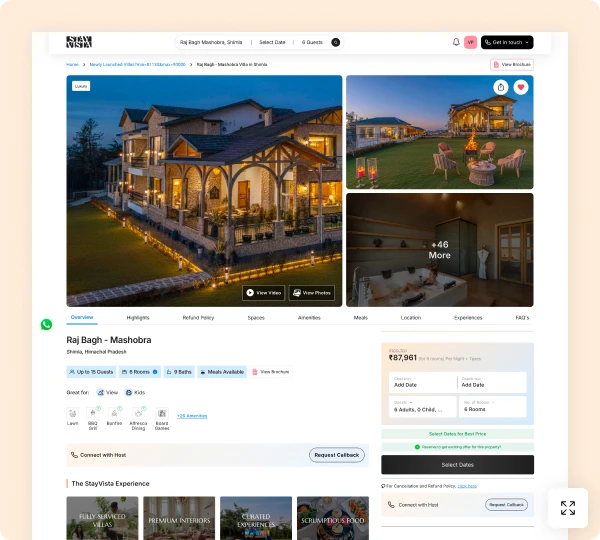

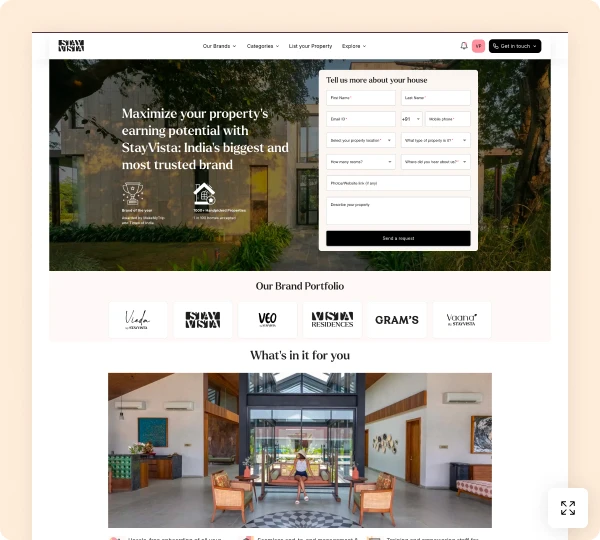

Increased booking conversion by 20% with AI-powered property matching

60%

Higher Booking Conversion

35%

More Property Views

1,000+

Personalized Matching from 1,000+ Properties

Achieved 92% detection accuracy scanning property photos for 15 asset categories

92%

Detection Accuracy

70%

Faster Property Audits

15

Asset Categories Classified